In our four-part blog series "How to feed your Chatbot", we explore the topics of chatbots and voice assistants. In doing so, we would like to demonstrate how such assistants work in general and, of course, whether (and how) they can be used for service and user information.

In this fourth and final part, we would like to ensure that our voice assistant becomes more "socially acceptable". By now, the key use cases have long been identified, possible questions for the voice assistant have been elicited from the target audience, and the assistant has been fed meaningful content. Perhaps the metadata concept has been refined and a Content Delivery Portal introduced, so that the voice assistant can point to the relevant passages in the documentation whenever they fit the context.

So everything seems ready to go: the voice assistant can be brought online and delight the world with the information everyone’s been waiting for! But this is a crucial moment. Once the voice assistant is live, the first people will want to try it out. If it doesn’t work as planned, or if the target audience asks different questions than expected, everyone will be disappointed. And persuading a disappointed target audience to give it another go after you have made improvements is far more difficult than getting that initial magic right the first time. You should therefore not hesitate to schedule mandatory test phases, such as an Alpha Test of the voice assistant and an overall usability test.

But how can voice assistants be tested properly? Making modifications is very difficult here. In an Alpha Test, however, you should proceed in a very targeted way: with the help of a few test users, find out whether everything works technically as intended and whether the voice assistant responds appropriately to canned questions or topics.

Essential before starting: Usability tests under real-world conditions

The Alpha Test should be followed by a Beta Test, designed as a real usability test with representatives from the target audience. This usability test should be scaled to match the rest of the project. Any corners cut during this critical step will become painfully apparent from the very first day the voice assistant goes live. Sophisticated usability tests offer a wide range of options, such as surveys, documented observations during use, and various other methods. The usability tests should also be geared toward your own key use cases. In the simplest case, you can grant your test users exclusive access to a trial version of the voice assistant and ask them to test it under their real-world conditions. The results can be documented in a simple matrix: In which specific situation did you ask? What specifically did you ask? What answer did you receive? What answer did you expect? Such a matrix can be a valuable aid in optimising a voice assistant before it’s brought online.
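The four-question matrix described above could be kept in a spreadsheet, but it is also easy to capture in a few lines of code. The following is only a minimal sketch in Python: the class name `Observation`, the helpers `to_csv` and `needs_review`, and the field names are all hypothetical and not part of any particular chatbot software. The string comparison is deliberately naive; in practice a human would judge whether an answer met the expectation.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class Observation:
    """One row of the usability-test matrix (hypothetical structure)."""
    situation: str        # In which specific situation did you ask?
    question: str         # What specifically did you ask?
    answer_received: str  # What answer did you receive?
    answer_expected: str  # What answer did you expect?

    def matches_expectation(self) -> bool:
        # Naive check: identical text after trimming and lower-casing.
        # A real evaluation would rely on a human reviewer.
        received = self.answer_received.strip().lower()
        expected = self.answer_expected.strip().lower()
        return received == expected


def to_csv(observations):
    """Serialise the matrix to CSV text, one row per observation."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=[f.name for f in fields(Observation)]
    )
    writer.writeheader()
    for obs in observations:
        writer.writerow(asdict(obs))
    return buffer.getvalue()


def needs_review(observations):
    """Return the rows where the received answer differs from the expected one."""
    return [obs for obs in observations if not obs.matches_expectation()]
```

Test users would fill in one `Observation` per question they asked; the rows returned by `needs_review` are the candidates for optimisation before the launch.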

After the initial launch, you should use the first few days to learn how users really use the voice assistant. For example, in the software we’ve used to build our Agent Smarty, we can see, in anonymised form, which questions people have asked that the voice assistant wasn’t able to answer. We can then assign the correct answer, so the voice assistant continues to learn and becomes more accurate in its responses. The anonymised usage statistics also allow some initial conclusions to be drawn: How many questions are asked per session? After which specific questions do additional questions follow, and when is the target audience’s thirst for knowledge finally quenched? There are opportunities for fine-tuning hidden here, which should be leveraged for regular revisions of the voice assistant.
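To illustrate the kind of evaluation described above, here is a minimal sketch in Python. It assumes a hypothetical, anonymised interaction log in chronological order, where each entry is a dictionary with the keys `session_id`, `question` and `answered`. This format is an assumption made for the example; it is not the export format of the software used for Agent Smarty.

```python
from collections import Counter, defaultdict


def unanswered_questions(log):
    """Count how often each question could not be answered."""
    return Counter(entry["question"] for entry in log if not entry["answered"])


def questions_per_session(log):
    """Average number of questions asked per session (0.0 for an empty log)."""
    per_session = Counter(entry["session_id"] for entry in log)
    if not per_session:
        return 0.0
    return sum(per_session.values()) / len(per_session)


def follow_up_counts(log):
    """For each question, count how often another question followed it
    within the same session. Assumes the log is in chronological order."""
    by_session = defaultdict(list)
    for entry in log:
        by_session[entry["session_id"]].append(entry["question"])

    followed = Counter()
    for questions in by_session.values():
        # Every question except the last one in a session had a follow-up.
        for question in questions[:-1]:
            followed[question] += 1
    return followed
```

Questions that appear often in `unanswered_questions` are the first candidates for new answers, while questions with a high follow-up count may indicate answers that did not fully satisfy the user.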

Regular revisions or updates should also be strictly scheduled, because the product offering will of course change, and additional answers from the voice assistant will become necessary. In addition, you can regularly learn about your users' requirements for both the information portfolio and the products themselves.

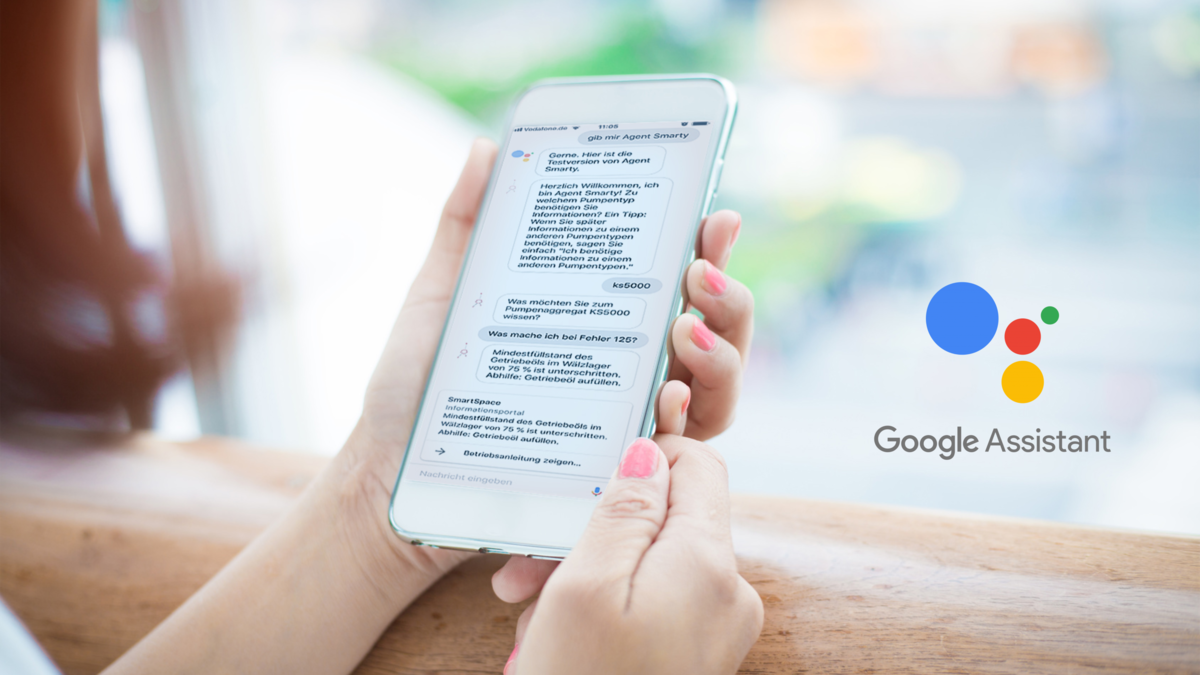

Curious now? Why not try out for yourself what a voice assistant for user information might look and feel like? All you need is the Google Assistant app and your Google Account. This will allow you to test our Agent Smarty voice assistant. It can answer questions whose answers can also be found in the operating manual. You can view the operating manual (after you register) on our smart space.

- Start the Google Assistant app and say "Okay Google, start Agent Smarty!"

- When asked about the pump type, answer "KS5000".

- Now you can ask the voice assistant various questions about the smarty KS5000 Pump unit. For example: "What do I do if I receive Error 125?" or "How does the control unit work?"

Are you interested in having your own voice assistant for service and user information? Then please feel free to contact us.